Discover McSCert

Research

Through research, McSCert creates methodologies and tools that revolutionize the certification of critical software applications and facilitate their development.

Services

McSCert offers a variety of services, including consulting, contract research and development, contract software certification, training and more. We partner with a broad range of clients.

Publications

McSCert researchers are academic and industry leaders. View our publications to learn more about the key topics our researchers are currently working on.

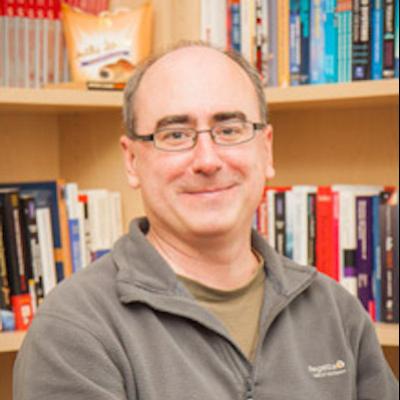

Director

MESSAGE FROM THE DIRECTOR

Welcome to the McMaster Centre for Software Certification (McSCert).

Our world-leading group of researchers, faculty, and industrial partners is dedicated to the certification of software-intensive systems, as well as the design of such systems to enable certification.

With more than 30 years of cumulative experience, our faculty members have been actively engaged with various industries, addressing certification challenges in diverse sectors such as nuclear power generation, medical devices, automotive, aerospace, avionics, telecommunications, finance, and beyond. Our dynamic collaborations with industry partners and regulators involve over 80 students and researchers working in these and other domains.

The research environment at McSCert is not only intellectually stimulating but also well equipped with cutting-edge technology, augmented by access to industry partners who are leading the way in developing and deploying critical systems.

Whether you are a student, researcher, industry partner, regulator, or a start-up company, please get in touch. We’d love to hear from you and explore new possibilities with you!

Safe, Secure and Dependable Software

McSCert develops tools and methods to create certifiably safe, secure and dependable software. This work is urgently needed for software-intensive mission-critical systems where software failure can have devastating physical, financial or political consequences.

Software is used to control medical devices, automobiles, aircraft, manufacturing plants, nuclear generating stations, space exploration systems, elevators, electric motors, trains, banking transactions, telecommunications devices and a growing number of devices in industry and in our homes.